In the past few weeks, I’ve had dozens of people come to me and question the feasibility of the AI ecosystem. Here’s the summary →

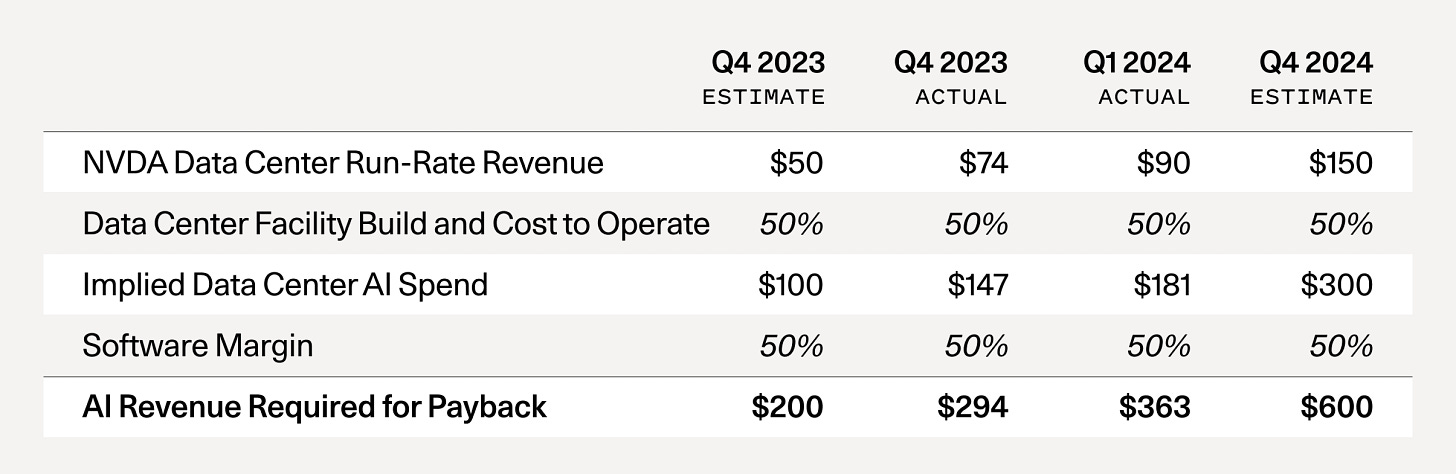

In recent weeks, I've had dozens of people come to me and question the feasibility of the AI ecosystem. This skepticism stems from Sequoia’s famous article, “AI’s $600B Question,” which highlights the discrepancy between GenAI expenses and current revenue. For reference, here’s the original article.

The AI Revenue Gap

The crux of the argument is this:

AI training expenses are exceptionally high.

To support this level of capital expenditure, GenAI applications should be generating nearly $600 billion in revenue.

Even the most optimistic estimates place current revenue around $100 billion.

This leaves a staggering $500 billion "hole" in revenue.
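
To make the arithmetic explicit, here is a minimal sketch in Python. It uses only the two figures quoted above ($600 billion required, $100 billion current); the function and variable names are my own, chosen for illustration.

```python
# Figures quoted in the argument above, in billions of USD.
REQUIRED_REVENUE_BN = 600  # revenue needed to support current capital expenditure
CURRENT_REVENUE_BN = 100   # the most optimistic estimate of current revenue


def revenue_gap(required: float, current: float) -> float:
    """Return the shortfall between required and current revenue."""
    return required - current


def coverage_ratio(required: float, current: float) -> float:
    """Return the fraction of the required revenue that already exists."""
    return current / required


gap_bn = revenue_gap(REQUIRED_REVENUE_BN, CURRENT_REVENUE_BN)
coverage = coverage_ratio(REQUIRED_REVENUE_BN, CURRENT_REVENUE_BN)

print(f"Revenue gap: ${gap_bn:.0f}B")
print(f"Current revenue covers {coverage:.0%} of what is required")
```

Put differently, revenue would need to grow about sixfold from the optimistic estimate to close the gap.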

This leads to an obvious question: Is AI sustainable?

My Perspective

1. Unprecedented Growth

Unlike past tech cycles (such as crypto or mobile), the AI revolution isn't driven solely by future potential. It's fueled by real, substantial dollars being exchanged:

Large cloud providers are paying significant sums to companies like Nvidia.

Customers are shelling out considerable amounts to use AI software (OpenAI seems to be making over $1 billion from ChatGPT).

While the speed of spending increase is unprecedented (barring the infrastructure build-up before the dot-com bubble), the pace of revenue growth is equally remarkable. Consider this: GenAI has been in the spotlight for barely 18 months, yet it's already approaching a $100 billion revenue rate. That's nothing short of incredible!

2. Unit Economics

In most cases, GenAI is profitable on a per-inference basis. While I haven't conducted detailed calculations, I believe that companies like OpenAI and Anthropic aren't losing money when an average paying customer makes an inference call. (I could be wrong, but this is my current understanding.)

3. Training vs. Inference Costs

The majority of the $600 billion figure likely represents training costs and associated expenses like synthetic data generation, not inference costs. Two phenomena are occurring simultaneously:

As GenAI consumption increases, inference is increasingly moving to the edge, which will help keep costs down.

Small Language Models (SLMs) are growing in intelligence faster than Large Language Models (LLMs). This trend could lead to more efficient and cost-effective AI solutions in the future.

4. The Future Landscape

Today, most of the large companies are training their own foundation models. This might not be the best allocation of resources. The AI ecosystem may eventually reach an equilibrium where:

A handful of companies train their own foundation models.

These models are then licensed to others for fine-tuning or general commercial access.

Currently, the world gains the most value from three primary LLM families: OpenAI's models, Anthropic's models, and Llama. If the industry consolidates around a few core model providers, with others focusing on fine-tuning and specialized applications, we could see training costs plummet. This by itself could solve the cost problem Sequoia described.

Of course, as Mark Zuckerberg recently pointed out, no one wants to be left behind in what could be the next major tech platform. This explains why many companies are willing to incur short-term losses to stay in the game.

General Valuation Concerns in the Startup Space

It's worth noting that some current AI valuations are indeed inflated, and there might be a valuation bubble. Expectations for short-term AI revenue from public companies are unrealistic.

Post-ChatGPT, we've seen an explosion of new AI companies. However, in my opinion, most of these companies should be valued like SaaS companies rather than deep-tech firms.

Conclusion

Personally, I'm not overly concerned about the current state of AI spending. While we may be in a phase of minor overvaluation, I don't believe we're in an AI bubble.

Consider this: By the end of the year, with the launch of Apple's AI features in the iPhone 16 and Meta's integration of LLMs across its platforms, over half a billion people will be using LLM capabilities in some form. For a technology less than two years old, that's remarkably fast adoption!

The AI revolution is here, and it's moving at an unprecedented pace.